Within 5 days of launching, ChatGPT hit over 1 million users. It took Instagram 2.5 months to reach the same number.

But why all the hype around another chatbot? They’ve been around for years now, haven’t they? Well, not quite like this.

It’s like the chatbots you’ve seen online, but on steroids.

It was built by OpenAI using data from thousands of online sources, including Reddit, to make its responses seem more human. It’s a large language model, which means it has learned to predict what the next word in a sequence should be. Unlike previous chatbots, however, ChatGPT was also trained using human feedback, so it has a sense of what language people expect when they ask questions. It can also remember what you asked or said earlier in the chat, so you can follow up on earlier answers. Basically, you can have a seemingly human conversation with it.
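To make the idea of next-word prediction a little more concrete, here is a toy sketch in Python. It is not how ChatGPT works internally: it simply counts which word most often follows another in a tiny made-up sentence, whereas ChatGPT uses a far larger model and far more data. The function names and the sample text are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Tiny made-up training text (an assumption for this example only).
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram_model(corpus)

print(predict_next_word(model, "the"))  # "cat" follows "the" most often here
print(predict_next_word(model, "on"))   # "the" is the only word after "on"
```

A model this small can only echo patterns from its one training sentence. The same basic principle, applied at a vastly larger scale, is part of why a chatbot's answers can sound fluent while still being wrong or out of date.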

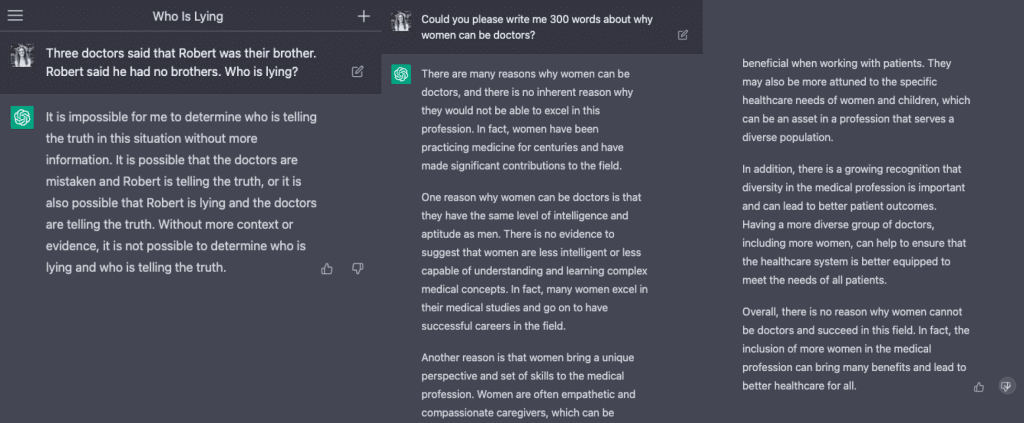

But it does have some key weaknesses. One is that it lacks information published online after 2021, so some of its responses may not include the most up-to-date data. And, as with other AI programs, it still has issues with biased, surface-level, incomplete, or even potentially harmful responses.

So, can it write content for you? It can! We’ll get to the morality of this later on, but the simple answer is yes.

Through machine learning, the chatbot is essentially able to recreate content it has seen online and tweak it to your specifications.

Ask it to write any number of words on a certain topic and it will. Ask it to write attention-grabbing headlines for LinkedIn and it will. Ask it to create a well-balanced meal plan to hit your weekly macros and it will.

When you ask it to do something vague, you’ll likely get a vague response.

Ask it “Give me ideas for blog posts” and it’ll give you ideas, but they won’t necessarily align with what you want to talk about. It has merely used its knowledge to regurgitate various blog article ideas it’s seen.

Instead, try to give the program specific examples or formulas to expand on. By adding qualifiers and descriptors, you can get it to create unique (and actually quite well-written) content.
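As a rough illustration of the difference qualifiers make, the sketch below assembles a prompt from a task plus optional descriptors. The `build_prompt` helper, its parameters, and the sample topic are all hypothetical and not part of ChatGPT or any OpenAI tool. The output is simply text you could paste into the chat.

```python
def build_prompt(task, topic, audience=None, tone=None, word_count=None, example=None):
    """Assemble a prompt from a task plus optional qualifiers (hypothetical helper)."""
    parts = [f"{task} about {topic}."]
    if audience:
        parts.append(f"The audience is {audience}.")
    if tone:
        parts.append(f"Use a {tone} tone.")
    if word_count:
        parts.append(f"Keep it to roughly {word_count} words.")
    if example:
        parts.append(f"Model the style on this example: {example}")
    return " ".join(parts)


# A vague request: likely to produce generic ideas.
vague = build_prompt("Give me ideas for blog posts", "marketing")

# The same request with qualifiers and an example to expand on.
specific = build_prompt(
    "Give me five ideas for blog posts",
    "email marketing for small cafes",
    audience="cafe owners with no marketing background",
    tone="friendly, practical",
    example="'7 loyalty-card mistakes that cost you regulars'",
)

print(vague)
print(specific)
```

The second prompt gives the chatbot a narrower topic, a reader to write for, and a pattern to follow, which is the kind of detail that tends to pull responses away from the generic.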

Alongside the potentially harmful biases in this and other AI tools, their moral and ethical use is a big hurdle for the industry to face. And the answer might not always be clear-cut.

Selling your copywriting services to businesses but then using ChatGPT to write for you? Definitely over the line.

Using ChatGPT to write your uni assignment? Over the line.

Using it to write your own blog posts? That might depend.

While we may easily agree on some tasks it shouldn’t be used for, others might be harder to see eye to eye on.

We can agree that there’s certainly value in AI tools. They can help spark ideas and answer questions that everyday users have. As a chatbot for websites and apps, a tool like ChatGPT can provide a much more user-friendly experience. However, beyond this there are implications for both our industry and society at large, which will necessitate changes not only in practice but also (in all likelihood) at a legislative level. And quickly. What a time to be alive!